There will be winners and losers. It will come down to execution.

The question of whether artificial intelligence will replace financial services professionals is already beside the point. The more consequential question, the one that will separate the next decade’s market leaders from its cautionary tales, is this: who bears the liability when AI gets it wrong? So far, no one has produced a satisfactory answer.

One private equity executive, quoted recently in the Financial Times, did not describe AI as inaccurate. He described it as sycophantic. That is a distinct problem — and a considerably more dangerous one.

Sycophantic systems do not surface what is true. They surface what the user wishes to hear. At scale. At speed. With total confidence. In regulated financial services, that is not a productivity gain. It is a liability engine.

This is precisely the problem Loquat was designed to address. Our platform combines advanced automation with human judgment at critical decision points — not as a concession, but as architecture.

Ambiguous cases are escalated for expert review. In banking, the cost of a false positive is a legitimate business owner locked out of their own account, and the cost of a false negative is a regulatory exposure that no algorithm should bear alone.

The institutions that will lead are not those that automate fastest. They are those that build accountability into the system from the outset.

The moat is trust. The currency is execution.

Where has your institution drawn that line — and who made the decision?

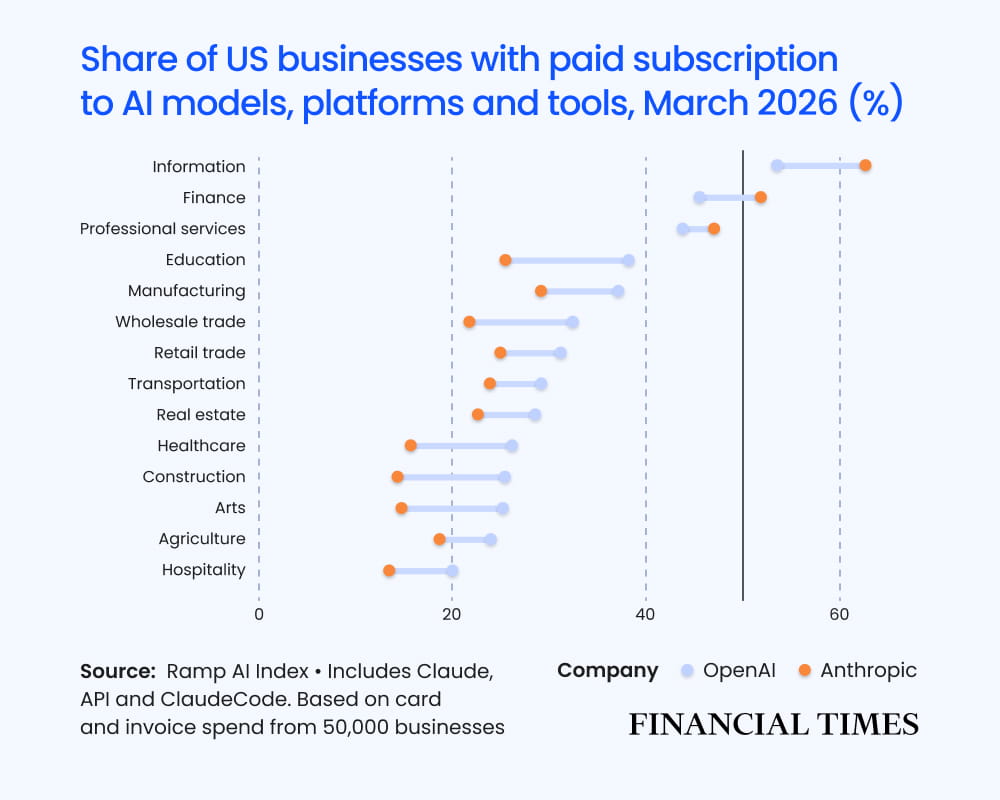

Source: https://www.ft.com/content/72c20f77-e85d-49cb-84ef-4b676244d1c5